How Instagram Is Becoming A Safer Social Media Platform

Social media platforms have received mixed press over the years. While advances in communication and technology open avenues for social and intellectual advancement, concerns over mental health and bullying are among a string of issues Instagram has had to address since its rise. Instagram has made strides in promoting positivity on the platform, but how is it addressing and minimising the use of Instagram for negative, harmful and destructive activity?

Here are the ways Instagram has been working to make the platform a safe environment for all…

2016 Updates:

- Hiding Comments

- Filtering Keywords

- Turning Off Comments

- Removing Followers

- Reporting Updates

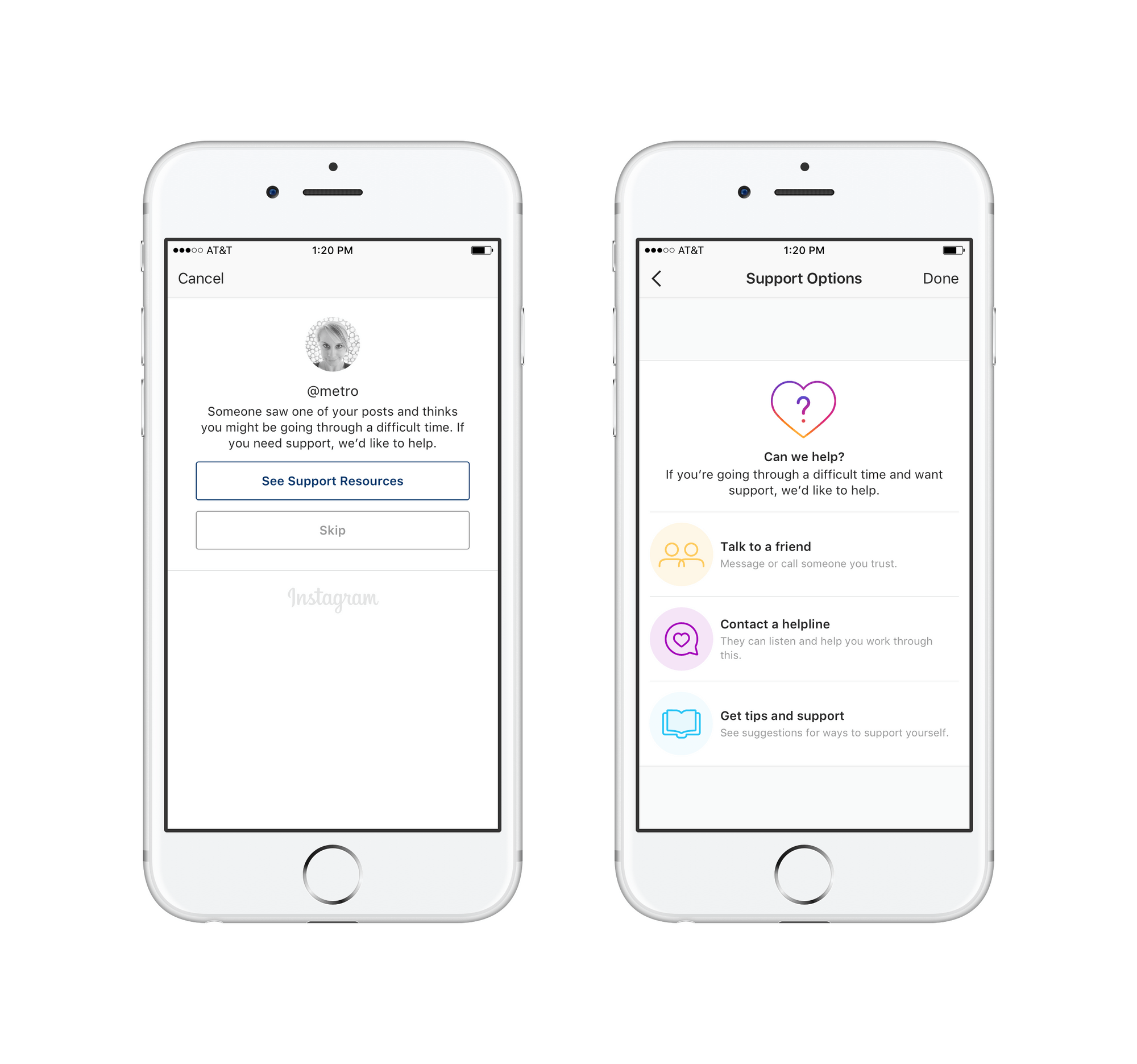

2016 saw the first major communications about safety from Instagram to its users, through the Instagram info centre. In September, Instagram introduced a feature allowing users to hide inappropriate comments by filtering keywords. In December, a stream of new safety features was rolled out. These included a feature to turn off comments on a post, the ability to remove followers from a private account without having to block the user, and anonymous reporting of self-injury posts alongside help offered to the reported user.

2017 Updates:

- Screens Over Sensitive Content

- Reporting Intimate Images

- Mental Health Resources

- Private Accounts

- Blocking Users

- Blocking Offensive Comments

- Reducing Spam Comments

- Anonymous Reporting

- Content Advisory Screens

2017 saw a string of strict measures put in place, showing the proactive steps Instagram was taking to safeguard users against harmful and sensitive content. March brought the first ever screens over content that had been reported and confirmed to be sensitive. The following month, Instagram reiterated the importance of reporting unsuitable content and announced it would be partnering with safety organisations to offer ‘resources and support’. This became even more evident in May, when reporting content began to prompt mental health resources. The final health and safety announcement arrived in December, when Instagram added content advisory screens that appear when a user searches for a hashtag associated with behaviour harmful to animals or the environment, in an effort to protect wildlife and nature from exploitation.

Additional features were also added to give users more control over their own profiles. These included: choosing who sees posts; the ability to block anyone; further control over the comments you want to see; and the ability to report any abuse, bullying, harassment or impersonation. In June, Instagram introduced a filter for hiding offensive comments and began using machine learning to reduce spam comments. By September, Instagram had rolled out filters to block certain comments in more languages. The ability to report live video was also added.

2018 Updates:

- Mute Feature

- Anti-Bullying Comments Filter

- Notification Muting

- Activity Dashboard

- Resources For Parents

- Detecting Bullying With Machine Learning Technology

In 2018, social media channels began taking their role in users' mental health more seriously. Instagram dedicated its health and safety efforts for the year to anti-bullying measures and features that support positive mental health.

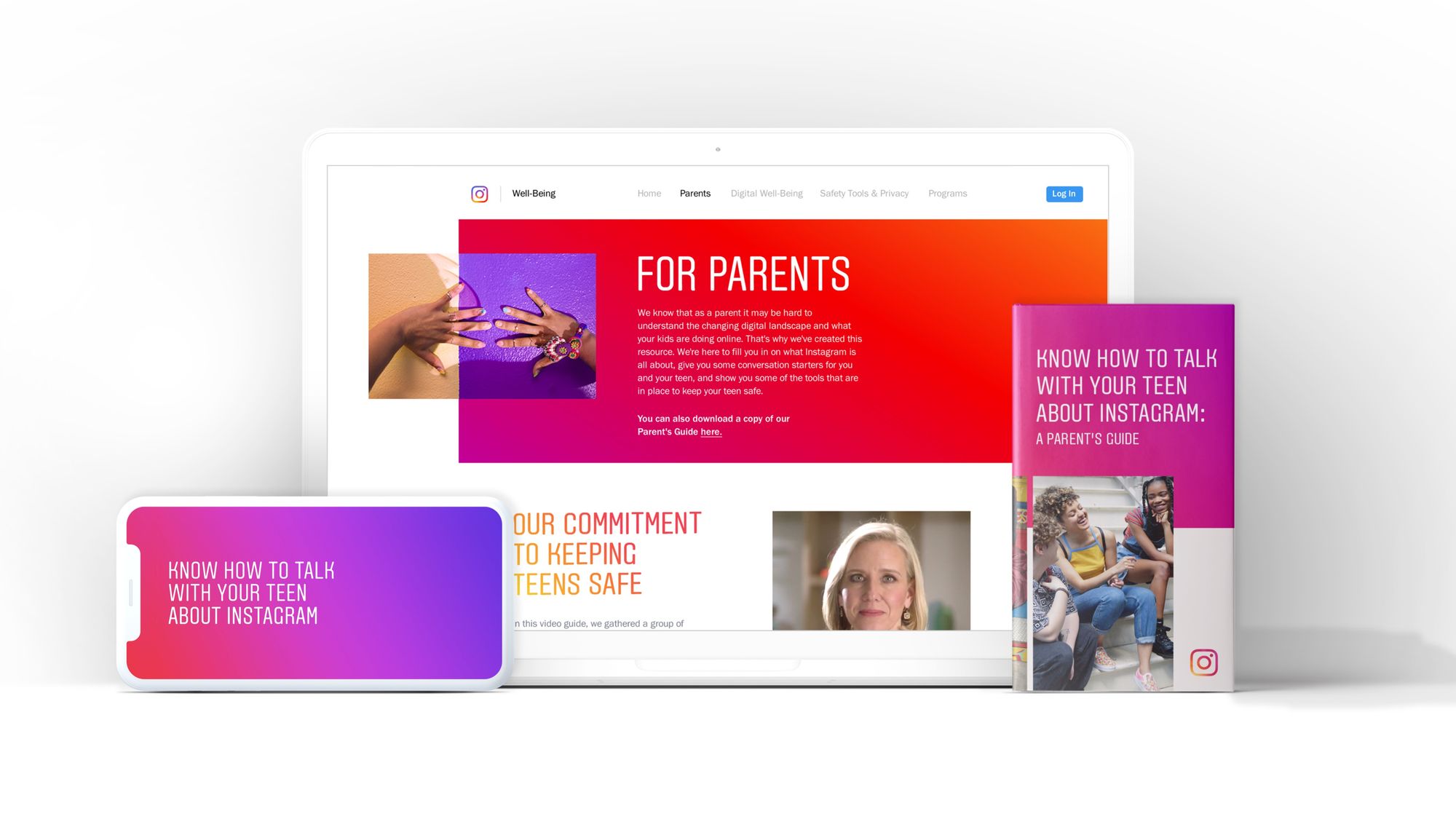

In May, Instagram announced the mute feature, which allows users to control the posts they see in their feed. Muting a profile doesn’t alert the muted profile, and it lets a user stop seeing that profile's posts without needing to unfollow it. August saw some big changes to the attitude towards mental health on the platform. Instagram created a dashboard where users can see how long they are spending on the platform. From there, the user can mute notifications from Instagram for a period of time. Another huge triumph was the introduction of resources for the parents of teens using Instagram. Social media is such a new medium, so it is important to equip parents to make positive choices about social media use for their families. The following month, Instagram announced it was using machine learning technology to proactively detect bullying in photos and their captions and send them for review.

2019 Updates:

- Addressing Harmful Imagery

- AI Alerts

- Restricting Accounts

By 2019, the building blocks of safety and security had been put in place to give users the best tools to remove, report and be protected from harm on Instagram. This year saw the very serious topics of self-harm and suicide come to the front of the conversation. Unfortunately, a platform where anyone can share any content can leave space for harmful content. This is why Instagram stepped up and made it clear that this kind of content is not allowed and will not be shared, and that its efforts to support vulnerable people are being increased. Instagram is working with other companies and using additional technology to make the platform safer and reduce this kind of content.

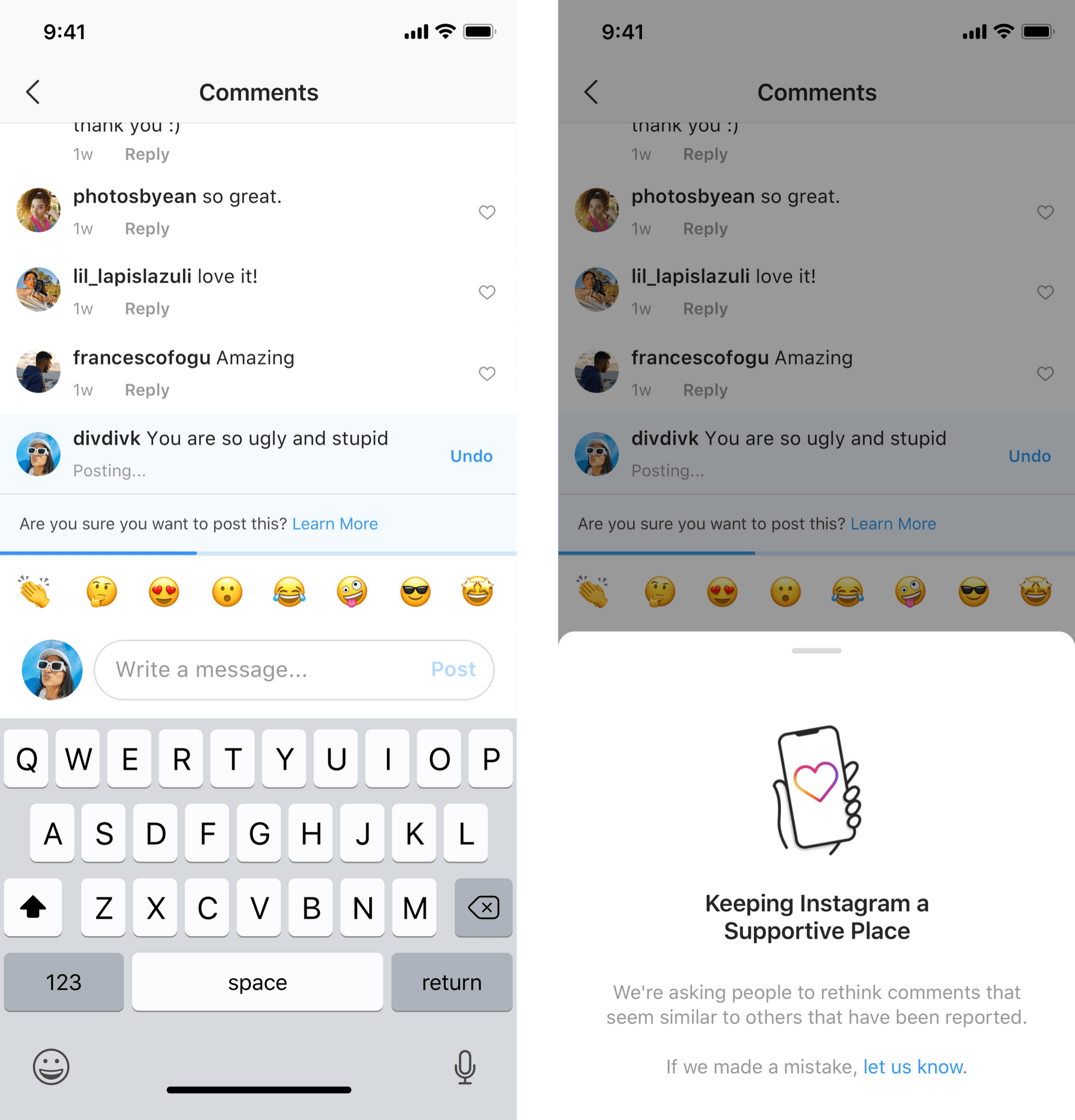

In July, Instagram announced AI alerts that prompt users to rethink a comment if it is considered to be offensive. Users were also given the option to restrict other users. This allows a user to limit who sees the restricted account’s comments on their content and stops the restricted account from seeing when they are online. This is an effort to protect the user from unwanted interactions.

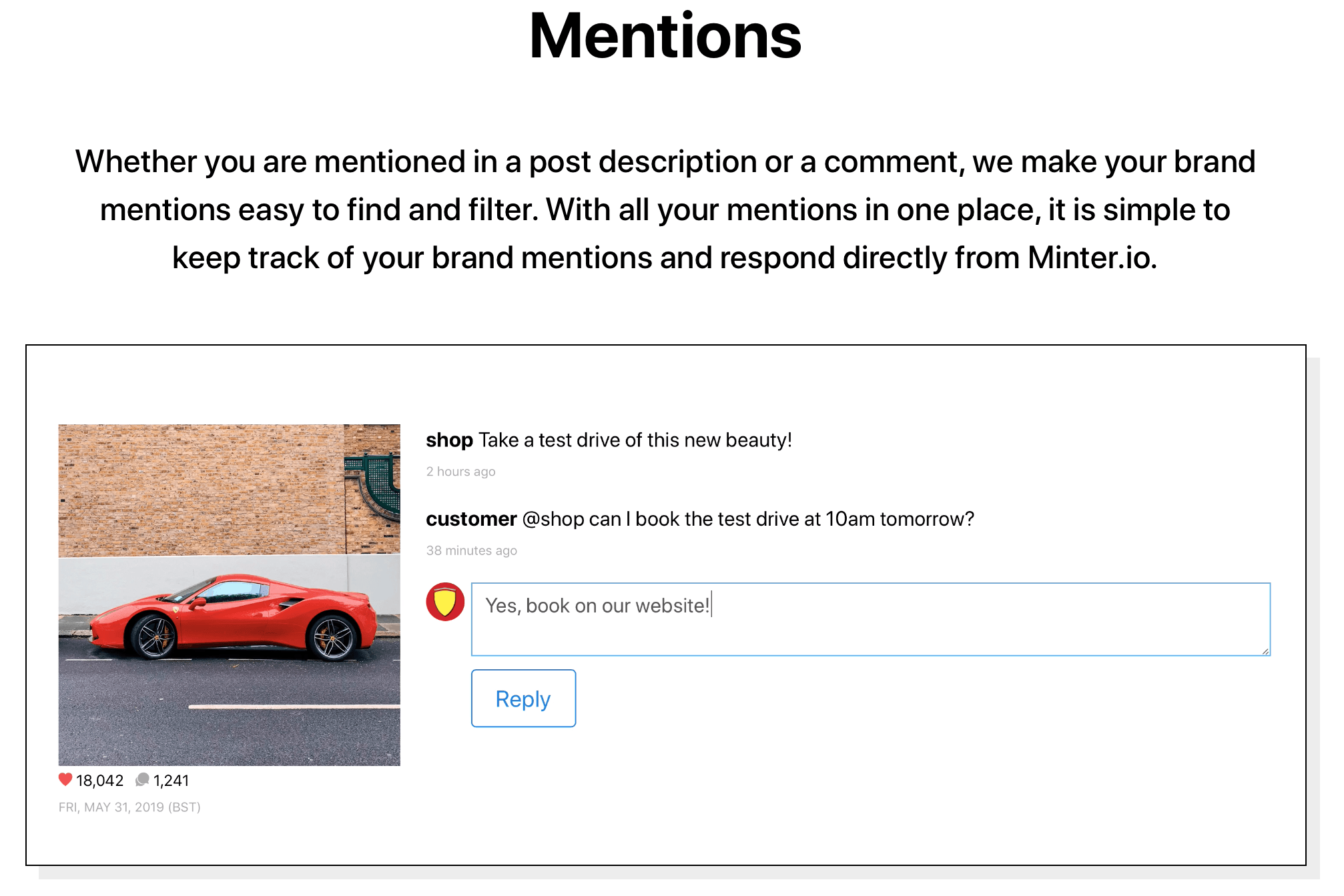

Minter.io has created a feature so that you can view and reply to any mention of your profile in any caption or comment anywhere on Instagram. This allows your business to simply see and respond to your mentions in one place, while giving you the opportunity to be proactive in limiting negativity or encouraging positivity associated with your business. Check it out today!